I. Introduction to the LDA Topic Model

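The LDA topic model is mainly used to infer the topic distribution of documents. It gives the topics of each document in a collection as a probability distribution, which can then be used for topic clustering or text classification.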

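The LDA topic model ignores the order of words in a document and usually represents a document with bag-of-words features. For an introduction to the bag-of-words model, see this article: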

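Here, just as the Beta distribution is the conjugate prior of the binomial distribution, the Dirichlet distribution is the conjugate prior of the multinomial distribution.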
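The practical meaning of conjugacy is that the posterior stays in the same family as the prior. A minimal sketch of the Dirichlet-multinomial case, where the posterior parameters are simply the prior parameters plus the observed counts (the function names and the toy numbers below are illustrative assumptions, not part of the original post):

```python
def dirichlet_posterior(alpha, counts):
    """Return the Dirichlet parameters after observing multinomial counts."""
    if len(alpha) != len(counts):
        raise ValueError("alpha and counts must have the same length")
    # Conjugate update: add each observed count to the matching prior parameter
    return [a + n for a, n in zip(alpha, counts)]


def dirichlet_mean(alpha):
    """Return the mean of a Dirichlet distribution with parameters alpha."""
    total = sum(alpha)
    return [a / total for a in alpha]


prior = [1.0, 1.0, 1.0]   # hypothetical symmetric prior over three outcomes
counts = [5, 2, 0]        # hypothetical observed counts
posterior = dirichlet_posterior(prior, counts)
print(posterior)                  # [6.0, 3.0, 1.0]
print(dirichlet_mean(posterior))  # expected proportions under the posterior
```

The Beta-binomial case is the same update with only two outcomes.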

If we want to generate a document, the probability of each word appearing in it is:
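In the standard formulation of LDA, this probability is a mixture over topics. With $w$ a word, $d$ a document, $z$ the topic and $K$ the number of topics (notation assumed here, not taken from the original post), it is usually written as:

$$
p(w \mid d) = \sum_{k=1}^{K} p(w \mid z = k)\, p(z = k \mid d)
$$

In words: P(word | document) is the sum over all topics of P(word | topic) × P(topic | document).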

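A more detailed mathematical derivation can be found in:

For word segmentation and stop-word handling, see "用python对单一微博文档进行分词——jieba分词(加保留词和停用词)" on 阿丢是丢心心的博客 (CSDN).

The input file I use below has likewise already been segmented.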

1. Import the packages

import warnings

import gensim
import matplotlib
import matplotlib.pyplot as plt
import numpy as np
from gensim import corpora
from gensim.models.coherencemodel import CoherenceModel
from gensim.models.ldamodel import LdaModel

warnings.filterwarnings('ignore')  # suppress all warnings to keep the output readable

2. Load the data

First convert the documents into a two-dimensional (nested) list, in which each sublist represents one Weibo post:

PATH = "E:/data/output.csv"

# Read the whole file and split it into lines (one pre-segmented post per line)
with open(PATH, encoding='utf-8', errors='ignore') as f:
    file_object2 = f.read().split('\n')

data_set = []  # list that stores the tokens of each document
for line in file_object2:
    result = line.split()  # tokens on each line are separated by whitespace
    data_set.append(result)

print(data_set)

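Build the dictionary and convert the corpus to vectors:

dictionary = corpora.Dictionary(data_set)  # build the dictionary (token -> integer id)
corpus = [dictionary.doc2bow(text) for text in data_set]  # each document becomes a list of (token_id, count) pairs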
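To make the bag-of-words representation concrete, here is a minimal pure-Python sketch of the same idea. It is not gensim's implementation: the helper names `build_vocab` and `to_bow` are made up for illustration, and ids are assigned in order of first appearance, which may differ from the ids gensim assigns.

```python
from collections import Counter


def build_vocab(docs):
    """Map each distinct token to an integer id, in order of first appearance."""
    vocab = {}
    for doc in docs:
        for token in doc:
            if token not in vocab:
                vocab[token] = len(vocab)
    return vocab


def to_bow(doc, vocab):
    """Convert a token list into sorted (token_id, count) pairs."""
    counts = Counter(vocab[token] for token in doc if token in vocab)
    return sorted(counts.items())


# Toy documents, already split into tokens (hypothetical data)
docs = [["apple", "banana", "apple"], ["banana", "cherry"]]
vocab = build_vocab(docs)
bows = [to_bow(doc, vocab) for doc in docs]
print(vocab)  # {'apple': 0, 'banana': 1, 'cherry': 2}
print(bows)   # [[(0, 2), (1, 1)], [(1, 1), (2, 1)]]
```

Word order is discarded: only which tokens occur and how often is kept, which is exactly the information LDA uses.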

3. Build the LDA model

ldamodel = LdaModel(corpus, num_topics=10, id2word=dictionary, passes=30, random_state=1)  # 10 topics
print(ldamodel.print_topics(num_topics=10, num_words=15))  # print the top 15 words of each topic

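This is how the LDA model is built when the number of topics is already fixed. In general, we can use metrics to evaluate how good a model is, and the same metrics can be used to choose the optimal number of topics. The metrics commonly used to evaluate LDA topic models are perplexity and topic coherence: lower perplexity or higher coherence indicates a better model. Some studies suggest that perplexity is not a good metric, so I usually use coherence to evaluate the model and choose the number of topics, but the code below includes both methods.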

# Compute perplexity
def perplexity(num_topics):
    ldamodel = LdaModel(corpus, num_topics=num_topics, id2word=dictionary, passes=30)
    print(ldamodel.print_topics(num_topics=num_topics, num_words=15))
    # log_perplexity returns gensim's per-word likelihood bound on the given corpus
    bound = ldamodel.log_perplexity(corpus)
    print(bound)
    return bound

# Compute coherence
def coherence(num_topics):
    ldamodel = LdaModel(corpus, num_topics=num_topics, id2word=dictionary, passes=30, random_state=1)
    print(ldamodel.print_topics(num_topics=num_topics, num_words=10))
    ldacm = CoherenceModel(model=ldamodel, texts=data_set, dictionary=dictionary, coherence='c_v')
    score = ldacm.get_coherence()
    print(score)
    return score
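One detail worth keeping in mind when reading the perplexity numbers: gensim's `log_perplexity` returns a per-word likelihood bound (usually a negative number), not the perplexity itself, and gensim reports the perplexity as 2^(-bound). So a higher bound corresponds to a lower perplexity. A small sketch of the conversion (the helper name `bound_to_perplexity` and the sample values are hypothetical):

```python
def bound_to_perplexity(per_word_bound):
    """Convert a per-word likelihood bound into a perplexity value."""
    return 2 ** (-per_word_bound)


# Hypothetical bounds for two models, for illustration only
bound_a = -8.0
bound_b = -7.0
print(bound_to_perplexity(bound_a))  # 256.0
print(bound_to_perplexity(bound_b))  # 128.0, the higher bound gives the lower perplexity
```

If the returned values are plotted directly, the best model is therefore the one with the largest value, not the smallest.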

4. Plot the topic-coherence curve and choose the best number of topics

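x = range(1, 15)
# z = [perplexity(i) for i in x]  # use this line instead to evaluate with perplexity
y = [coherence(i) for i in x]

plt.rcParams['font.sans-serif'] = ['SimHei']  # a font that can render Chinese characters
matplotlib.rcParams['axes.unicode_minus'] = False  # render the minus sign correctly with this font
plt.plot(x, y)
plt.xlabel('Number of topics')
plt.ylabel('Coherence')
plt.title('Coherence vs. number of topics')
plt.show()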
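Besides reading the plot by eye, the topic count with the highest coherence score can be picked programmatically. A minimal sketch, assuming the scores are in a list aligned with the candidate topic counts (the helper `best_num_topics` and the scores below are made up for illustration and are not results from the original post):

```python
def best_num_topics(topic_counts, scores):
    """Return the topic count whose coherence score is highest."""
    if len(topic_counts) != len(scores) or not scores:
        raise ValueError("topic_counts and scores must be non-empty and aligned")
    best_index = max(range(len(scores)), key=lambda i: scores[i])
    return topic_counts[best_index]


x_demo = list(range(1, 8))                            # candidate topic counts 1..7
y_demo = [0.31, 0.35, 0.38, 0.41, 0.47, 0.44, 0.42]   # hypothetical coherence scores
print(best_num_topics(x_demo, y_demo))  # 5
```

The highest score is only a starting point; it is still worth checking whether the resulting topics are interpretable before settling on a number.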

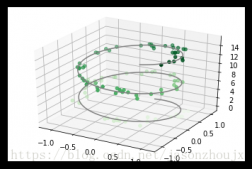

最终能得到各主题的词语分布和这样的图形:

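5. Output and visualize the results

From the evaluation above, 5 is a reasonable choice for the number of topics. Next we can run the model again with the number of topics set to 5 and output the most likely topic for each document: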

import csv

import pandas as pd
from gensim import corpora, models
from gensim.corpora import Dictionary
from gensim.models import LdaModel

# Prepare the data
PATH = "E:/data/output1.csv"

with open(PATH, encoding='utf-8', errors='ignore') as f:
    file_object2 = f.read().split('\n')  # one pre-segmented document per line

data_set = []  # list that stores the tokens of each document
for line in file_object2:
    data_set.append(line.split())  # tokens on each line are separated by whitespace

dictionary = corpora.Dictionary(data_set)  # build the dictionary
corpus = [dictionary.doc2bow(text) for text in data_set]

lda = LdaModel(corpus=corpus, id2word=dictionary, num_topics=5, passes=30, random_state=1)
topic_list = lda.print_topics()
print(topic_list)

# For each document, print the id of its most probable topic
for doc_topics in lda.get_document_topics(corpus):
    listj = [prob for _, prob in doc_topics]  # probabilities of the topics returned for this document
    bz = listj.index(max(listj))  # position of the largest probability
    print(doc_topics[bz][0])  # topic id at that position
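The selection step in the loop above can also be written more compactly with `max` and a key function. A small sketch on made-up data in the same shape that `get_document_topics` returns, that is, a list of (topic_id, probability) pairs per document (the helper name `dominant_topic` and the sample values are assumptions for illustration):

```python
def dominant_topic(doc_topics):
    """Return the id of the topic with the highest probability."""
    topic_id, _prob = max(doc_topics, key=lambda pair: pair[1])
    return topic_id


# Hypothetical topic distributions for two documents
demo = [
    [(0, 0.10), (2, 0.75), (4, 0.15)],
    [(1, 0.55), (3, 0.45)],
]
print([dominant_topic(doc) for doc in demo])  # [2, 1]
```

Note that the position of a pair in the list is not the topic id, because topics with a very small probability can be omitted from the list. This is why the topic id is read from the pair itself.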

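We can also use pyLDAvis to visualize the results of the LDA model:

import pyLDAvis.gensim  # in newer pyLDAvis releases this module is named pyLDAvis.gensim_models

pyLDAvis.enable_notebook()  # only needed when running inside a Jupyter notebook
data = pyLDAvis.gensim.prepare(lda, corpus, dictionary)
pyLDAvis.save_html(data, 'E:/data/3topic.html')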

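You get roughly the following result:

The circles on the left represent the topics, and the right side shows how much each word contributes to a topic.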

The complete code is as follows:

import warnings

import gensim
import matplotlib
import matplotlib.pyplot as plt
import numpy as np
from gensim import corpora
from gensim.models.coherencemodel import CoherenceModel
from gensim.models.ldamodel import LdaModel

warnings.filterwarnings('ignore')  # suppress all warnings to keep the output readable

# Prepare the data
PATH = "E:/data/output.csv"

with open(PATH, encoding='utf-8', errors='ignore') as f:
    file_object2 = f.read().split('\n')  # one pre-segmented document per line

data_set = []  # list that stores the tokens of each document
for line in file_object2:
    data_set.append(line.split())  # tokens on each line are separated by whitespace

print(data_set)

dictionary = corpora.Dictionary(data_set)  # build the dictionary
corpus = [dictionary.doc2bow(text) for text in data_set]  # build the document-term matrix (bag of words)

# Lda = gensim.models.ldamodel.LdaModel  # create the LDA object

# Compute perplexity
def perplexity(num_topics):
    ldamodel = LdaModel(corpus, num_topics=num_topics, id2word=dictionary, passes=30)
    print(ldamodel.print_topics(num_topics=num_topics, num_words=15))
    # log_perplexity returns gensim's per-word likelihood bound on the given corpus
    bound = ldamodel.log_perplexity(corpus)
    print(bound)
    return bound

# Compute coherence
def coherence(num_topics):
    ldamodel = LdaModel(corpus, num_topics=num_topics, id2word=dictionary, passes=30, random_state=1)
    print(ldamodel.print_topics(num_topics=num_topics, num_words=10))
    ldacm = CoherenceModel(model=ldamodel, texts=data_set, dictionary=dictionary, coherence='c_v')
    score = ldacm.get_coherence()
    print(score)
    return score

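# Plot the coherence curve
x = range(1, 15)
# z = [perplexity(i) for i in x]  # use this line instead to evaluate with perplexity
y = [coherence(i) for i in x]

plt.rcParams['font.sans-serif'] = ['SimHei']  # a font that can render Chinese characters
matplotlib.rcParams['axes.unicode_minus'] = False  # render the minus sign correctly with this font
plt.plot(x, y)
plt.xlabel('Number of topics')
plt.ylabel('Coherence')
plt.title('Coherence vs. number of topics')
plt.show()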

import csv

import pandas as pd
from gensim import corpora, models
from gensim.corpora import Dictionary
from gensim.models import LdaModel

# Prepare the data
PATH = "E:/data/output1.csv"

with open(PATH, encoding='utf-8', errors='ignore') as f:
    file_object2 = f.read().split('\n')  # one pre-segmented document per line

data_set = []  # list that stores the tokens of each document
for line in file_object2:
    data_set.append(line.split())  # tokens on each line are separated by whitespace

dictionary = corpora.Dictionary(data_set)  # build the dictionary
corpus = [dictionary.doc2bow(text) for text in data_set]  # build the document-term matrix (bag of words)

lda = LdaModel(corpus=corpus, id2word=dictionary, num_topics=5, passes=30, random_state=1)
topic_list = lda.print_topics()
print(topic_list)

# Collect the id of the most probable topic for each document
result_list = []
for doc_topics in lda.get_document_topics(corpus):
    listj = [prob for _, prob in doc_topics]  # probabilities of the topics returned for this document
    bz = listj.index(max(listj))  # position of the largest probability
    result_list.append(doc_topics[bz][0])  # topic id at that position

print(result_list)

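import pyLDAvis.gensim  # in newer pyLDAvis releases this module is named pyLDAvis.gensim_models

pyLDAvis.enable_notebook()  # only needed when running inside a Jupyter notebook
data = pyLDAvis.gensim.prepare(lda, corpus, dictionary)
pyLDAvis.save_html(data, 'E:/data/topic.html')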

Help yourself if you need it~

You can also follow me; I will be posting more practical articles on data analysis~


Original article: https://blog.csdn.net/weixin_41168304/article/details/122389948